What is AI SRE?

AI SRE is the practice of applying AI, primarily large language models and specialized agents, to the work of site reliability engineering. The category covers a wide spectrum: at one end, ML-assisted alerting and chat-based log search; at the other, fully autonomous agentic platforms that detect, diagnose, decide, remediate, and audit incidents within a policy envelope. Calling all of this "AI SRE" obscures real differences in capability and risk profile, so the rest of this guide draws those distinctions explicitly.

The phrase only became common in 2024. Before that, the field was called AIOps, a 2010s term for ML-driven alert correlation and anomaly detection. AIOps worked, but it had a structural ceiling: better-ranked alerts still required a human to acknowledge, investigate, and execute the fix. AI SRE is the broader 2024+ category that adds modern LLMs, tool-use, and autonomous execution on top of the AIOps signal layer. The shift is from "AI that helps humans see problems" to "AI that does the work humans were paged for."

If you want the architectural deep-dive on the autonomous end of this spectrum, see our full guide to Agentic SRE as the operating system for autonomous reliability. AI SRE is the umbrella; Agentic SRE is one (high-leverage) implementation pattern within it.

AI SRE vs traditional SRE: where AI changes the work

The job of a site reliability engineer is fundamentally the same with or without AI: keep production healthy, manage incidents, automate toil, run blameless postmortems, evolve architecture toward resilience. AI does not replace that mandate. It changes what fraction of the work the human personally executes versus what they orchestrate.

| Activity | Traditional SRE | AI SRE |
|---|---|---|
| Alert triage | Human acknowledges, opens dashboards | Agent triages, surfaces only escalations |
| Log analysis | Human writes Splunk/Loki queries | Natural-language query, auto-summary |
| Root-cause diagnosis | 30+ minutes of dashboard hopping | Agent produces causal graph in seconds |
| Runbook execution | Human reads + executes manually | Agent executes within policy envelope |
| Postmortem drafting | ~3 hours of writing | Auto-drafted from incident timeline |
| On-call rotation | Engineer paged at 3 a.m. | Agent first; engineer for true escalations |
| Capacity planning | Quarterly spreadsheet exercise | Continuous, AI-assisted forecasting |
| Architecture review | Human-led, judgment-heavy | Stays human, AI provides priors only |
| Policy authorship | Human-led | Stays human (this becomes more of the job) |

Read top-to-bottom, the table says: routine, executable, well-understood work shifts to AI. Novel, judgment-heavy, and policy work stays with humans, and in fact becomes more of the human's job. The SRE role does not shrink. It moves up the stack.

The honest caveat. Most teams adopting AI SRE in 2026 are not running fully autonomous remediation yet. The most common path starts with AI-assisted triage and log search, which deliver value within a week, and then graduates to autonomous remediation on the simplest 10–15 runbook patterns over the following quarter. That phasing is healthy; it is what trust scoring is for.

The five places AI is reshaping site reliability

Marketing pages will tell you AI is changing "everything." It is not. Five specific surfaces are where the actual leverage sits.

1. Detection

LLM-aware anomaly detection that understands context, not just statistical outliers. A 5x latency spike during a known deploy is not the same as a 5x spike at 3 a.m. AI detection learns those distinctions, fires on the second case, and stays quiet on the first. Result: a 60–90% reduction in noisy pages without missing real incidents.
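The deploy-versus-3-a.m. distinction can be sketched in a few lines. This is a deliberately simplified, rule-based stand-in for what the text describes as learned behavior: the `DeployWindow` type, the `should_page` function, and the fixed 5x threshold are all hypothetical names and values chosen for illustration, not any platform's API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class DeployWindow:
    """A known deploy, during which latency spikes are expected (hypothetical type)."""
    start: datetime
    duration: timedelta

    def covers(self, moment: datetime) -> bool:
        return self.start <= moment <= self.start + self.duration


def should_page(spike_ratio: float, observed_at: datetime,
                deploys: list[DeployWindow], threshold: float = 5.0) -> bool:
    """Page only when the spike crosses the threshold outside every known deploy window."""
    if spike_ratio < threshold:
        return False
    return not any(window.covers(observed_at) for window in deploys)


deploys = [DeployWindow(datetime(2026, 1, 10, 14, 0), timedelta(minutes=30))]
print(should_page(5.0, datetime(2026, 1, 10, 14, 10), deploys))  # same spike, during the deploy
print(should_page(5.0, datetime(2026, 1, 11, 3, 0), deploys))    # same spike, 3 a.m., no deploy
```

A real detector would weigh far more context than a deploy calendar, but the shape is the same: the statistical signal is identical in both calls, and only the surrounding context decides whether a human is paged.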

2. Diagnosis

Causal-graph reasoning across logs, metrics, traces, and recent deploys. The agent reads the same telemetry the SRE would, in parallel, in seconds. The output is a ranked hypothesis list with provenance: which signals supported which conclusion. Eliminates the 15–30 minute "open dashboards, search logs, check Git" phase that dominates MTTR.
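One way to picture a "ranked hypothesis list with provenance" is as a small data structure. The sketch below is an assumption about how such output might be modeled: the `Hypothesis` class, the evidence weights, and the sum-of-weights scoring are illustrative choices, not a description of how any particular agent reasons.

```python
from dataclasses import dataclass, field


@dataclass
class Hypothesis:
    cause: str
    # Provenance: (signal source, observation, weight) tuples backing this cause.
    evidence: list[tuple[str, str, float]] = field(default_factory=list)

    @property
    def score(self) -> float:
        return sum(weight for _, _, weight in self.evidence)


def rank(hypotheses: list[Hypothesis]) -> list[Hypothesis]:
    """Return hypotheses ordered from most to least supported."""
    return sorted(hypotheses, key=lambda h: h.score, reverse=True)


candidates = [
    Hypothesis("database connection pool exhausted", [
        ("metrics", "pool utilization at 100%", 0.5),
    ]),
    Hypothesis("bad deploy of checkout service", [
        ("deploys", "release shipped 4 minutes before the spike", 0.6),
        ("traces", "new code path dominates slow spans", 0.3),
    ]),
]

for h in rank(candidates):
    sources = ", ".join(source for source, _, _ in h.evidence)
    print(f"{h.score:.1f}  {h.cause}  (from: {sources})")
```

The point of keeping the evidence attached to each hypothesis is reviewability: an engineer who disagrees with the ranking can see which signal drove it.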

3. Runbook execution

Agent-driven runbook execution within a policy envelope. The human writes the policy ("scale this replica set, but never beyond 20% of total in one minute"), and the agent executes the action when the diagnosis matches a known pattern. Rollbacks, replica scaling, IAM rotations, certificate renewals, and cache flushes make up 60–80% of nightly pages, and they can now be automated all the way through execution.
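The example policy in the paragraph above ("never beyond 20% of total in one minute") can be read as a sliding-window budget. The sketch below takes that reading as an assumption; the `ScalingEnvelope` class and its method names are hypothetical, and a production policy engine would carry much more state.

```python
from collections import deque


class ScalingEnvelope:
    """Caps replica changes to a fraction of the total within a sliding time window."""

    def __init__(self, max_fraction: float = 0.20, window_seconds: float = 60.0):
        self.max_fraction = max_fraction
        self.window_seconds = window_seconds
        self._changes: deque[tuple[float, int]] = deque()  # (timestamp, replicas changed)

    def allow(self, now: float, total_replicas: int, delta: int) -> bool:
        """Record and approve the change if it fits the budget; otherwise refuse it."""
        # Forget changes that have aged out of the window.
        while self._changes and now - self._changes[0][0] >= self.window_seconds:
            self._changes.popleft()
        used = sum(abs(change) for _, change in self._changes)
        budget = self.max_fraction * total_replicas
        if used + abs(delta) > budget:
            return False  # outside the envelope: escalate to a human instead
        self._changes.append((now, delta))
        return True


envelope = ScalingEnvelope()
print(envelope.allow(now=0.0, total_replicas=50, delta=6))   # 6 of a 10-replica budget
print(envelope.allow(now=10.0, total_replicas=50, delta=6))  # would bring the window to 12
print(envelope.allow(now=61.0, total_replicas=50, delta=6))  # first change has aged out
```

The design choice worth noting is that the check lives outside the agent. The agent proposes an action, and the envelope decides whether it runs, so a wrong diagnosis cannot exceed the budget the human wrote.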

4. Knowledge work (postmortems, runbooks, docs)

Auto-drafted postmortems from the incident timeline. Auto-generated runbook drafts from "what we just did to fix it." These do not replace human review, but they cut the writing time from 3 hours to 20 minutes. The compounding effect: teams that adopt AI for knowledge work actually finish their postmortems instead of skipping them, which closes the learning loop traditional SRE often loses.

5. On-call

Agent-first on-call. The page goes to the agent first; only escalations reach a human. This is the highest-leverage change of the five because it directly attacks on-call burnout, the #1 cause of senior SRE attrition. Teams that ship agent-first on-call typically see 60–80% of routine pages closed without a human ever seeing them.
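The routing rule behind agent-first on-call is small enough to write down. This is a minimal sketch assuming two inputs the text implies but does not name: whether the incident matches a known pattern, and whether the agent's remediation failed. `Route` and `route_page` are hypothetical names.

```python
from enum import Enum


class Route(Enum):
    AGENT = "agent"
    HUMAN = "human"


def route_page(known_pattern: bool, remediation_failed: bool = False) -> Route:
    """Agent first; a human is paged only for novel incidents or failed remediation."""
    if not known_pattern or remediation_failed:
        return Route.HUMAN
    return Route.AGENT


print(route_page(known_pattern=True))                           # routine page stays with the agent
print(route_page(known_pattern=True, remediation_failed=True))  # the fix did not hold
print(route_page(known_pattern=False))                          # novel incident
```

Everything interesting sits in how `known_pattern` is decided, which is where the diagnosis layer and the policy envelope come in. The routing itself should stay this boring.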

Notice what is not on this list: AI doesn't replace your architecture decisions, your team's culture, your error budgets, or your SLO design. Those remain human, judgment-heavy work. AI SRE is a force multiplier on the executable parts of the job, not a replacement for the judgment.

The AI SRE tools landscape in 2026

The 2026 market splits cleanly into four lanes. Vendors will market themselves into all four. The architectural test below is how to actually tell them apart.

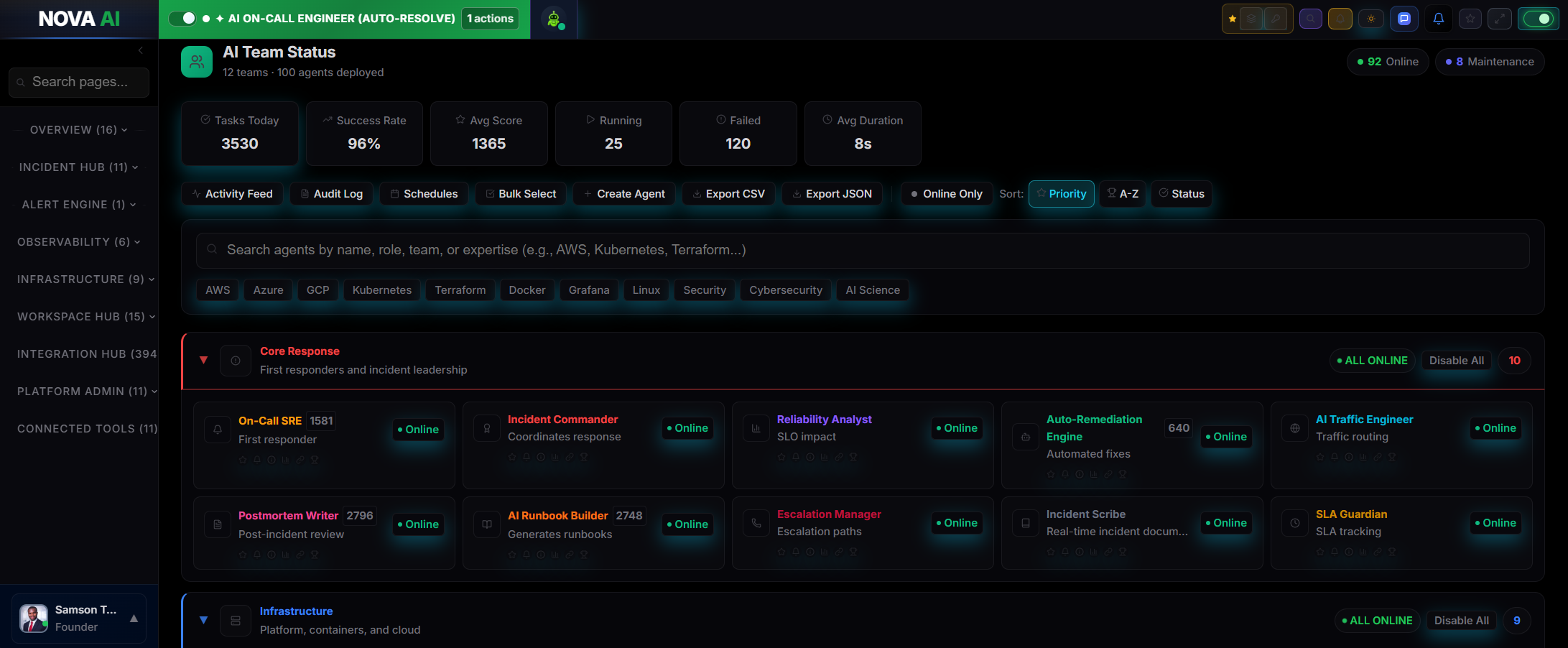

Lane 1: Agent-native platforms

Built AI-first from day one. Agents are first-class objects with identity, memory, trust scores, and bounded authority. Example: Nova AI Ops. The architectural strength is that autonomy is granular and revocable, the audit ledger is first-class, and the platform is designed around the assumption that agents will execute against production. The tradeoff is a shorter operational track record than the AIOps incumbents, so risk-averse buyers may want to start with a non-critical service.

Lane 2: AIOps-with-AI retrofits

Traditional AIOps platforms that have added LLM features on top of an existing alert-correlation engine. Examples: PagerDuty AIOps, BigPanda, Datadog AI Assistant, Dynatrace Davis CoPilot. The strength is operational maturity and broad integrations. The tradeoff is the AI is a layer, not the architecture. Agent autonomy, when it exists, is bolted on rather than built in. For many teams this is the right starting point because the rest of the platform is already integrated; for teams pursuing autonomous remediation, the architecture eventually constrains how far you can go.

Lane 3: Incident-response with AI

Modern incident-management platforms that have added AI features for triage, comms, and postmortems. Examples: incident.io, Rootly, FireHydrant. The strength is that the human-coordination layer (Slack, status pages, post-incident reviews) is excellent. The tradeoff is that they don't typically execute remediation; they orchestrate humans more efficiently. Often complementary to a Lane 1 or Lane 2 platform rather than a replacement.

Lane 4: Runbook automation specialists

Tools focused on the execution layer: take an alert, run a deterministic runbook, report results. Examples: Shoreline, OpsLevel automations. The strength is reliability and predictability of the runbook execution. The tradeoff is the diagnosis and decision-making layers are minimal; the runbook is selected by either rule-based matching or human approval, not by an agent reasoning over the incident state.

The right pick depends on whether you want AI as a feature on top of your existing stack (Lanes 2–3) or as the operator of a new stack (Lane 1). For a deeper architectural comparison of the two paradigms, see our breakdown of Agentic SRE vs AIOps and the architectural differences that matter.

How to evaluate an AI SRE platform: 10-point checklist

Use this in the first vendor demo. A platform that answers all 10 concretely is worth a pilot. A platform that needs to "circle back on the details" is almost certainly not as far along as the marketing claims.

- What AI tasks does it actually execute autonomously? "Surfaces insights" is not autonomous. Ask for the list of action types the platform writes against production.

- What is the trust model and revocation path? Per-agent, per-action trust scores or a single global toggle? When an agent misbehaves, does revocation take effect atomically, or does it only apply to future actions?

- Which clouds and OSes are first-class? "Supports AWS, GCP, Azure, Linux, Windows" should mean a uniform intent layer, not five separate integrations with different feature parity.

- What is the audit format and retention? Can you replay an action from 90 days ago and see the prompt, plan, API calls, and outcome?

- What is the policy graph model? Policy-as-code (versioned, reviewable, rollback-able) or policy-by-prompt (jailbreakable)?

- Does the platform read or write production state? Read-only AI is advisory. Write-capable AI is operational. The risk profile is completely different.

- What is the integration surface? Does it work with the observability stack you already have, or does it require ripping it out?

- What is the cold-start time on a new service? How long before agents have enough context to make accurate decisions? Days, weeks, or never?

- How does it handle novel incidents? Does it escalate cleanly to humans, or does it improvise and write a bad action to production?

- What is the per-engineer pricing at your team size? Many platforms have step-function pricing at 25/50/100 engineers; verify against your actual roadmap, not just today's headcount.
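To make the audit question in the checklist concrete, here is a minimal sketch of what a replayable record could contain. The schema is an assumption built from the four items the checklist names (prompt, plan, API calls, outcome) plus a 90-day retention window; `AuditRecord`, `is_replayable`, and the sample values are hypothetical and not taken from any vendor's format.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class AuditRecord:
    """One agent action, with everything needed to replay it (hypothetical schema)."""
    action_id: str
    executed_at: datetime
    prompt: str
    plan: str
    api_calls: tuple[str, ...]
    outcome: str


def is_replayable(record: AuditRecord, now: datetime,
                  retention: timedelta = timedelta(days=90)) -> bool:
    """True if the record is still inside retention and no replay field is empty."""
    within_retention = now - record.executed_at <= retention
    complete = all([record.prompt, record.plan, record.api_calls, record.outcome])
    return within_retention and complete


record = AuditRecord(
    action_id="act-001",
    executed_at=datetime(2026, 1, 1, tzinfo=timezone.utc),
    prompt="p99 latency alert on checkout",
    plan="roll back latest release",
    api_calls=("deployments.rollback",),
    outcome="latency recovered",
)
print(is_replayable(record, now=datetime(2026, 3, 31, tzinfo=timezone.utc)))
```

In a demo, the useful test is the inverse: ask the vendor to pull up a real action from months ago and check which of these fields are missing.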

The economics: ROI and the talent-retention math

Most AI SRE pitches lead with per-incident savings. That is the wrong frame. The two compounding levers are different.

Lever 1: Hours returned per engineer per week. A typical SRE on a busy team spends 12–25 hours per week on triage, drilldown, and routine remediation. AI SRE compresses that to 3–8 hours by automating the executable parts. Toward the upper end of those ranges, and assuming a 40-hour week, the team gets back roughly the equivalent of 0.4 SREs per current SRE in capacity, without hiring. At a fully loaded cost of $200K per SRE, that is about $80K of returned capacity per engineer per year.
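The Lever 1 arithmetic is easy to rerun with your own numbers. The sketch below assumes a 40-hour working week, which the text does not state; `returned_capacity` is a hypothetical helper, and the third input pair (20 hours down to 4) is an illustrative case chosen because it lands on the 0.4 figure.

```python
def returned_capacity(hours_before: float, hours_after: float,
                      loaded_cost: float = 200_000, work_week_hours: float = 40.0):
    """Return (fraction of an SRE returned, annual dollar value of that capacity)."""
    fraction = (hours_before - hours_after) / work_week_hours
    return fraction, fraction * loaded_cost


# Low and high ends of the quoted ranges, plus an illustrative 16-hour saving.
for before, after in [(12, 3), (25, 8), (20, 4)]:
    fraction, dollars = returned_capacity(before, after)
    print(f"{before}h -> {after}h: {fraction:.3f} SRE, ${dollars:,.0f} per year")
```

Under that assumption the quoted ranges span roughly 0.2 to 0.4 of an SRE, so plug in measured hours from your own on-call data before taking the number to finance.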

Lever 2: On-call attrition reduction. The dominant cost of bad on-call is not the minutes spent paging; it is that your senior SREs eventually quit. The cost to replace one senior SRE (recruiting, onboarding, time-to-productivity, and the lost institutional knowledge) is $300K–$600K. Most AI SRE platforms cost $30K–$150K per year for a 10-engineer team. The retention math alone justifies the spend if you prevent one attrition event per year.
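The retention claim can be checked as a break-even calculation using only the cost ranges quoted above. `retention_break_even` is a hypothetical helper; it ignores everything except the platform price and the replacement cost, which is a simplification.

```python
def retention_break_even(platform_cost: float, replacement_cost: float) -> float:
    """Attrition events that must be prevented per year to cover the platform cost."""
    return platform_cost / replacement_cost


# Best case: cheapest platform, most expensive replacement. Worst case: the reverse.
best = retention_break_even(platform_cost=30_000, replacement_cost=600_000)
worst = retention_break_even(platform_cost=150_000, replacement_cost=300_000)
print(f"break-even: {best:.2f} to {worst:.2f} prevented departures per year")
```

Even the worst-case pairing breaks even at half a prevented departure per year, which is why one prevented attrition event covers the spend across the whole quoted range.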

The honest framing: AI SRE is a talent-retention tool that happens to also cut MTTR. Lead with the retention number when you make the internal case. The minute savings are easy for skeptics to question; the burnout math is not.

A 90-day AI SRE rollout plan

A tested pattern that minimizes risk while still showing value early.

Days 1–14: AI-assisted triage and chat-based log search

Read-only AI on top of your existing observability stack. No write access. Goal: get the team comfortable with AI in the loop, validate that the diagnosis quality is real, and identify the 10 most common runbooks (which become candidates for autonomous execution later). Time-to-value: roughly one week.

Days 15–45: Pilot autonomous remediation on one runbook

Pick one well-understood runbook, ideally a pod restart or replica scale, on a non-critical service. Tight policy envelope: small blast radius, business-hours only, automatic rollback if validation fails. Watch the agent's accuracy for 4 weeks. If it is at 95%+ with zero rollbacks, move to the next phase. If not, iterate the policy.
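The exit criterion for the pilot (95%+ accuracy with zero rollbacks) is worth encoding so nobody argues about it in week four. A minimal sketch, assuming each autonomous run is logged with whether it was correct and whether it was rolled back; `PilotResult` and `ready_to_expand` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class PilotResult:
    """Outcome of one autonomous run during the pilot (hypothetical record)."""
    correct: bool
    rolled_back: bool


def ready_to_expand(results: list[PilotResult], min_accuracy: float = 0.95) -> bool:
    """Apply the gate from the text: accuracy of 95%+ and zero rollbacks."""
    if not results:
        return False  # no evidence yet, so stay in the pilot
    accuracy = sum(r.correct for r in results) / len(results)
    no_rollbacks = not any(r.rolled_back for r in results)
    return accuracy >= min_accuracy and no_rollbacks


clean = [PilotResult(True, False)] * 19 + [PilotResult(False, False)]
print(ready_to_expand(clean))                              # 19 of 20 correct, no rollbacks
print(ready_to_expand(clean + [PilotResult(True, True)]))  # one rollback blocks expansion
```

How "correct" is judged is the part that needs a human decision up front, for example a reviewer confirming after the fact that the agent chose the action they would have chosen.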

Days 46–75: Expand to 5 runbooks across 3 services

Once one runbook is reliably autonomous, scale across runbook types and services. By the end of this phase the agent should be closing 30–50% of routine pages without a human. The team's on-call shift should be visibly easier already.

Days 76–90: Agent-first on-call on a non-critical service

Flip on-call to agent-first on one service: pages go to the agent, escalate to humans only on failed remediation or novel incidents. This is the moment the platform's ROI becomes legible to leadership. Document the auto-resolution rate and engineer-hours returned for the quarterly review. Use that data to justify expanding to critical services in months 4–6.
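The two numbers to document for the quarterly review can be computed from page counts. This sketch assumes you track total pages, pages the agent closed, and an average handling time per page; the handling time is an assumption you would measure yourself, and the figures in the example are invented for illustration.

```python
def quarterly_summary(pages_total: int, pages_auto_resolved: int,
                      minutes_per_page: float) -> tuple[float, float]:
    """Return (auto-resolution rate, engineer-hours returned) for the review period."""
    if pages_total == 0:
        return 0.0, 0.0
    rate = pages_auto_resolved / pages_total
    hours_returned = pages_auto_resolved * minutes_per_page / 60
    return rate, hours_returned


# Illustrative inputs only, not measured results.
rate, hours = quarterly_summary(pages_total=120, pages_auto_resolved=54, minutes_per_page=25)
print(f"auto-resolution rate: {rate:.0%}, engineer-hours returned: {hours:.1f}")
```

Reporting both numbers together matters: a high auto-resolution rate on pages that took two minutes each returns far fewer hours than a modest rate on pages that took an hour.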

Skipping any step cuts short the time the team has to build trust in the agent and increases the chance of a high-blast-radius mistake. The discipline pays off later.

Frequently asked questions

What is AI SRE?

How is AI SRE different from AIOps?

What are the best AI SRE tools in 2026?

Does AI SRE replace SREs?

What is the ROI of AI SRE?

How do I evaluate an AI SRE platform?

Is AI SRE safe for production?

How long does AI SRE take to deploy?

What is the difference between AI SRE and Agentic SRE?

What metrics should I track to measure AI SRE success?

Related guides

Go deeper into the agentic reliability stack: Agentic SRE (the architecture), AIOps, AI incident response, incident management, root cause analysis, and self-healing infrastructure. On the operational metrics and practices: MTTR, alert fatigue, on-call management, and DevOps automation. On the SRE foundations: site reliability engineering, SLOs and error budgets, blameless postmortems, and chaos engineering. On telemetry and operations: observability, monitoring, the four golden signals, anomaly detection, capacity planning, runbooks, and eliminating toil. On the practice and platform: DevOps, platform engineering, CI/CD, and infrastructure as code. On the telemetry deep-dives: distributed tracing, log management, Kubernetes monitoring, microservices monitoring, and cloud cost optimization. For teams shipping AI systems: the AI engineer's guide to production reliability, LLMOps, and AI observability.


Nova AI Ops is the Multi Agent Operating System for SRE, DevOps, and Reliability Teams. 100 specialized AI agents across 12 teams, running on AWS, GCP, Azure, Linux, and Windows. Free tier available for small teams.