What is Agentic SRE?

Agentic SRE is an architecture for site reliability in which specialized AI agents own the full operational loop (detection, diagnosis, decision, remediation, audit, and learning) while a human stays accountable for policy. It is not a dashboard with AI bolted on. It is not a single large model answering chat questions about your infrastructure. It is a population of agents, each with a narrow specialization, a trust score, and a bounded scope of authority, cooperating to keep production running at a speed no human team can match.

The "why now" is unusually sharp. Three forces collided in 2024–2025 and produced this category. First, on-call fatigue hit a structural limit. Teams did not grow with signal volume; a 30-person SRE team today ingests more telemetry than a 300-person team did in 2015. Second, LLM capability crossed a functional threshold: models can now execute multi-step reasoning over structured observability data, call tools deterministically, and produce runbook-quality plans in seconds. Third, the economics changed: an agent that autonomously closes an incident at 2:47 a.m. costs a fraction of the on-call premium for one senior engineer.

The category exists because the gap between what production generates and what humans can process is no longer closeable by hiring. Agentic SRE is the response: let humans do the novel, accountable, judgment-heavy work; let agents do everything else.

Agentic SRE vs AIOps vs Traditional SRE

These three terms are often used interchangeably by vendors, which obscures what actually differs. The core distinction is who owns the resolution.

| | Traditional SRE | AIOps | Agentic SRE |
|---|---|---|---|
| Detection | Static thresholds, human-authored alerts | ML-based anomaly detection, correlation | Streaming agents with context + policy |
| Diagnosis | Human-led log / metric drilldown | Suggested root causes surfaced to humans | Agent produces causal graph in seconds |
| Decision | Human decides the fix | Human decides the fix | Agent decides within a policy envelope |
| Remediation | Human runs the runbook | Human runs the runbook | Agent executes; human approves escalations |
| Audit | Postmortem doc, partially recorded | ML reasoning rarely explainable | Immutable agent ledger, replayable |
| Learning | Team retrospectives, slow | Model retraining, weeks | Runbook + trust-score updates, minutes |
| Who is on-call at 3 a.m.? | A human | A human (with better alerts) | Agents first, human for escalations |

AIOps improved the input to human operators. Agentic SRE replaces the operator for the routine cases. The practical consequence: in a traditional or AIOps setup, a noisy night still wakes someone. In an Agentic SRE setup, most pages never fire, because the incident closes before it rises to a page.

A useful litmus test: if an SRE can leave the laptop closed during a brownout in a non-critical service and the incident still resolves itself with an audit trail, the stack is agentic. If not, it is AIOps with better paint.

The six capabilities of an Agentic SRE platform

An agentic stack is not defined by having "AI" inside. It is defined by whether it ships six tightly coupled capabilities. Missing any one collapses the loop back to human-in-the-critical-path.

1. Detection

Streaming signal analysis, not batch dashboards. Agents read metrics, logs, traces, and events in real time, apply context (deployments in the last 10 minutes, known-fragile services, business-hour weighting), and decide whether to act or watch. A detection event carries provenance: which signals, which baselines, which policy matched.
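To make "a detection event carries provenance" concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `DetectionEvent` fields, the `detect` function, the 1.5x tolerance, and the policy names are hypothetical and do not describe any particular product's API. It only shows the shape of the idea: the event records which signals fired, which baselines they were compared against, and which policy matched.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class DetectionEvent:
    """A detection plus the provenance needed to audit it later (hypothetical shape)."""
    service: str
    signals: tuple[str, ...]      # which signals fired
    baselines: dict[str, float]   # baseline each fired signal was compared against
    matched_policy: str           # which policy matched
    action: str                   # "act" or "watch"


def detect(service, observed, baselines, last_deploy, now, tolerance=1.5):
    """Flag signals above tolerance x baseline; act only if a deploy is recent.

    The 10-minute deploy window mirrors the example in the text; the
    tolerance and the policy names are illustrative assumptions.
    """
    fired = tuple(sorted(s for s, v in observed.items() if v > tolerance * baselines[s]))
    if not fired:
        return None
    recent_deploy = now - last_deploy <= timedelta(minutes=10)
    return DetectionEvent(
        service=service,
        signals=fired,
        baselines={s: baselines[s] for s in fired},
        matched_policy="post-deploy-regression" if recent_deploy else "baseline-drift",
        action="act" if recent_deploy else "watch",
    )


now = datetime(2025, 1, 1, 3, 0, tzinfo=timezone.utc)
event = detect(
    "checkout",
    observed={"p99_latency_ms": 900.0, "error_rate": 0.01},
    baselines={"p99_latency_ms": 300.0, "error_rate": 0.01},
    last_deploy=now - timedelta(minutes=4),
    now=now,
)
```

Because the event is an immutable record rather than a bare alert, a later audit can ask why the agent acted without re-deriving the context.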

2. Diagnosis

Root-cause reasoning across correlated signals, in seconds. The agent builds a causal graph (this deploy → this service → this dependency → this symptom) and names the probable cause with a confidence score. Good systems also produce the counter-evidence (the hypotheses considered and rejected), because a diagnosis without a rejected-alternatives list is not auditable.
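The pairing of a probable cause with its rejected alternatives can be sketched as a small data structure. This is an illustrative Python sketch, not a real diagnosis engine: `Hypothesis`, `Diagnosis`, and `diagnose` are hypothetical names, and the confidence values are supplied by hand rather than computed from telemetry.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    cause: str
    chain: tuple[str, ...]   # causal path: deploy -> service -> dependency -> symptom
    confidence: float        # 0.0 to 1.0
    evidence: str


@dataclass(frozen=True)
class Diagnosis:
    probable_cause: Hypothesis
    rejected: tuple[Hypothesis, ...]   # kept so the diagnosis stays auditable


def diagnose(hypotheses):
    """Pick the highest-confidence hypothesis and keep the rest as counter-evidence."""
    if not hypotheses:
        raise ValueError("at least one hypothesis is required")
    ranked = sorted(hypotheses, key=lambda h: h.confidence, reverse=True)
    return Diagnosis(probable_cause=ranked[0], rejected=tuple(ranked[1:]))


result = diagnose([
    Hypothesis("connection pool exhausted",
               ("deploy-412", "checkout", "postgres", "p99 latency"),
               0.82, "pool wait time rose right after the deploy"),
    Hypothesis("noisy neighbour on node",
               ("node-7", "checkout", "p99 latency"),
               0.11, "CPU steal stayed flat"),
])
```

The point of the design is that `rejected` is never discarded: a reviewer can see what else was considered and why it lost.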

3. Decision

Policy-bound authority to choose a fix. The platform must have a policy graph: which agents can do what, to which services, under which conditions. A decision without a policy envelope is just improvisation. A decision with a policy envelope is an auditable action with known blast radius.
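A policy graph of this kind (which agents can do what, to which services, under which conditions) can be approximated with a lookup table plus a condition predicate. The sketch below is a simplification under stated assumptions: `PolicyRule`, `PolicyGraph`, the agent names, and the context keys are all hypothetical, and a real policy graph would likely be richer than a flat dictionary.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class PolicyRule:
    agent: str
    action: str
    services: frozenset[str]            # which services the rule covers
    condition: Callable[[dict], bool]   # under which conditions it applies


class PolicyGraph:
    """Flat stand-in for a policy graph, keyed by (agent, action)."""

    def __init__(self, rules):
        self._rules = {(r.agent, r.action): r for r in rules}

    def authorize(self, agent, action, service, context):
        """Return (allowed, reason) so every decision has an auditable explanation."""
        rule = self._rules.get((agent, action))
        if rule is None:
            return False, "no rule grants this agent that action"
        if service not in rule.services:
            return False, "service is outside the agent's scope"
        if not rule.condition(context):
            return False, "condition not met; escalate to a human"
        return True, "within policy envelope"


graph = PolicyGraph([
    PolicyRule(
        agent="k8s-agent",
        action="restart_pod",
        services=frozenset({"checkout", "search"}),
        condition=lambda ctx: not ctx.get("change_freeze", False),
    ),
])
allowed, reason = graph.authorize("k8s-agent", "restart_pod", "checkout", {"change_freeze": False})
```

Returning a reason alongside the boolean is a deliberate choice here: a denial that cannot explain itself is hard to review later.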

4. Remediation

Execute across any cloud or OS. Scaling a replica set, rotating an IAM credential, running a runbook on a Linux host, restarting a Windows service: all of these must resolve through the same intent layer. If "remediation" only works on one cloud, it is not a remediation capability; it is a demo.
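One way to picture an intent layer is a table that maps a platform-neutral intent to a platform-specific command. The Python sketch below is an assumption-laden illustration: the `Intent` type and the `RESOLVERS` table are hypothetical, only one verb is covered, and the code builds command lines without executing them. `systemctl restart` and the PowerShell `Restart-Service` cmdlet are the only real commands it refers to.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Intent:
    verb: str      # e.g. "restart_service"
    target: str    # e.g. "nginx"
    platform: str  # "linux" or "windows"


# Each resolver turns one intent into a platform-specific command line.
# A real system would validate and quote `target` before building a command.
RESOLVERS = {
    ("restart_service", "linux"): lambda t: ["systemctl", "restart", t],
    ("restart_service", "windows"): lambda t: [
        "powershell", "-Command", f"Restart-Service -Name {t}",
    ],
}


def resolve(intent):
    """Translate an intent into a command; fail loudly when no resolver exists."""
    try:
        resolver = RESOLVERS[(intent.verb, intent.platform)]
    except KeyError:
        raise NotImplementedError(
            f"no resolver for {intent.verb} on {intent.platform}"
        ) from None
    return resolver(intent.target)


linux_cmd = resolve(Intent("restart_service", "nginx", "linux"))
windows_cmd = resolve(Intent("restart_service", "Spooler", "windows"))
```

The agent only ever states the intent; adding a new platform means adding a resolver, not teaching every agent a new command syntax.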

5. Audit

Immutable ledger of every decision. The prompt, the plan, the API calls, the outcome, and the rollback if needed are all cryptographically signed and retained. Without this, trust can't be awarded; with it, you can revoke an agent's autonomy retroactively the moment you detect a bad pattern.
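A common way to get tamper evidence of this kind is a hash chain with a signature on each entry. The sketch below assumes that approach; it is not a description of how any specific ledger is built. The `Ledger` class is hypothetical, and it uses an HMAC with a shared key for brevity, whereas a production system would more plausibly use asymmetric signatures and durable storage.

```python
import hashlib
import hmac
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class Ledger:
    """Append-only log: each entry commits to the previous one and is HMAC-signed."""

    def __init__(self, key: bytes):
        self._key = key
        self.entries = []

    def _payload(self, prev_hash: str, record: dict) -> bytes:
        # sort_keys gives a stable byte representation to hash and sign
        return json.dumps({"prev": prev_hash, "record": record}, sort_keys=True).encode()

    def append(self, record: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        payload = self._payload(prev_hash, record)
        entry = {
            "prev": prev_hash,
            "record": record,
            "hash": hashlib.sha256(payload).hexdigest(),
            "sig": hmac.new(self._key, payload, hashlib.sha256).hexdigest(),
        }
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and signature; any edit or reorder breaks the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            payload = self._payload(prev_hash, entry["record"])
            expected_sig = hmac.new(self._key, payload, hashlib.sha256).hexdigest()
            if (entry["prev"] != prev_hash
                    or entry["hash"] != hashlib.sha256(payload).hexdigest()
                    or not hmac.compare_digest(entry["sig"], expected_sig)):
                return False
            prev_hash = entry["hash"]
        return True


ledger = Ledger(key=b"demo-key-not-for-production")
ledger.append({"agent": "k8s-agent", "plan": "restart pod", "outcome": "resolved"})
ledger.append({"agent": "k8s-agent", "plan": "scale to 5", "outcome": "rolled back"})
```

Changing any stored record after the fact makes `verify()` return `False`, which is the property the audit capability depends on.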

6. Learning

Postmortems that update agent behavior without retraining a model. The agent that just resolved a novel failure should teach its sibling agents the new runbook, the new detection pattern, the new blast-radius rule. Learning happens via policy updates and ledger replays (fast, auditable, and reversible), not via monthly model retrains.

Most "AI-powered" observability products ship one or two of these and call it agentic. Nova AI Ops ships all six as first-class surfaces: the 100-agent fleet for detection and diagnosis, Nova Shell for cross-OS remediation, and the Agent Ledger for audit and trust scoring.

How Agentic SRE changes the on-call role

The most common question from SRE leaders is whether agents eliminate the role. They do not. They change what the role is, which is a bigger, harder shift.

In a pre-agentic stack, an SRE's day is triage-heavy: paging, acknowledging, drilldown, runbook execution, postmortem drafting. Roughly 70–80% of the work is mechanical and repetitive, which is exactly the slice agents are good at. When you deploy Agentic SRE, that 70–80% moves to agents within the first 60–90 days. The SRE's remaining 20–30% (policy authorship, novel failure modes, cross-team coordination, architecture review) becomes 100% of the job.

Three new responsibilities appear:

- Agent orchestration. Designing the agent population, scoping each agent's authority, tuning trust scores, and revoking autonomy when an agent misbehaves. This is a design skill, not an ops skill.

- Policy engineering. Writing the blast-radius rules, escalation ladders, and approval gates. A good policy graph is the single highest-leverage artifact an SRE team produces, because it bounds what 100 agents can do to your production.

- Novel-incident ownership. Agents handle the 99% case. The 1% that is genuinely new (a new failure mode, a new dependency, a new adversarial pattern) escalates to a human. These are the incidents that teach the agent population, so they deserve the highest-skill attention.

The SRE role does not disappear; it moves up the stack. Good teams explicitly retitle the function ("Agent Reliability Engineer" or "Agent Platform Engineer" is common) to signal the shift to their own organizations. The work is more leveraged, less reactive, and objectively harder.

Why 100 specialized agents beat one general agent

A reasonable reaction on first exposure to agentic architectures is: "why not one large, smart agent?" The answer is that generality has real costs, and at the scale of a production infrastructure those costs are prohibitive.

A single general agent has no persistent identity: nothing to attach a trust score to, nothing to rate-limit. It has no accumulated context for your specific systems; every incident starts from a cold reading of dashboards. And it has no bounded scope of authority, so the only way to constrain it is a global safety net, which collapses every decision into the same blast-radius envelope.

Specialized agents flip all three. A Kubernetes agent has seen every one of your clusters, every deployment pattern, every recurring failure mode. It has its own trust score that reflects the accuracy of its past decisions specifically on Kubernetes. Its permission envelope is narrow: it cannot touch RDS, cannot rotate IAM, cannot execute against Windows hosts. When it is wrong, the blast radius is bounded by construction.

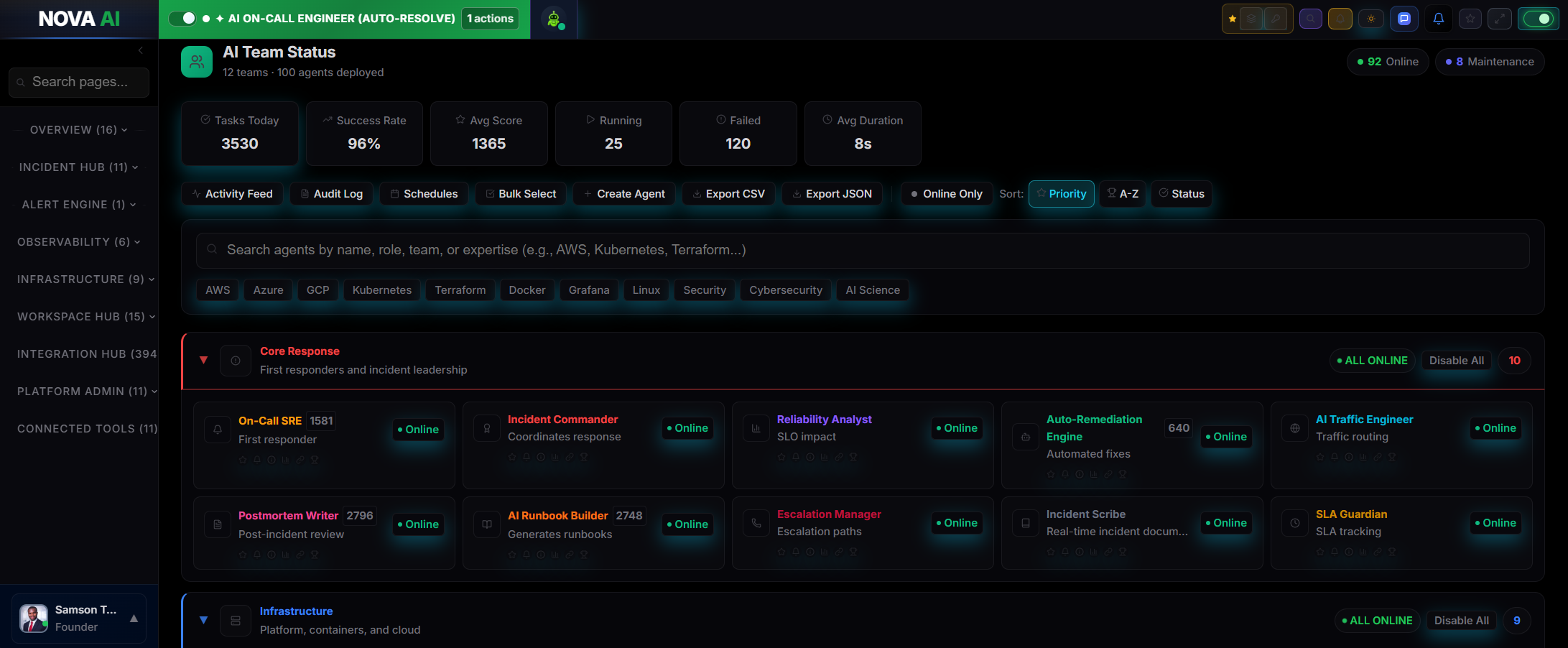

Nova AI Ops is built around 100 specialized agents across 12 teams:

The 12-team shape is not arbitrary. It maps directly to how modern infrastructure actually decomposes: along the seams where one team's authority ends and another's begins. When an incident spans teams (for example, a Postgres slow query that turns into a CDN misconfiguration), the Core Response agents coordinate across specialties without any of them needing global authority. This is the single biggest reliability win of the specialization approach: no single agent ever has enough authority to fail catastrophically.

Trust, safety, and the Agent Ledger

The objection enterprise buyers raise first, correctly, is blast radius. "What happens when an agent is wrong?" The answer lives in three mechanisms.

Trust scores. Every agent has a numeric trust score derived from its decision history. New agents start low. High-accuracy agents earn autonomy; agents that produce rollbacks lose it. The score is per-agent and per-action-type, so an agent can be trusted to restart a pod unattended while still needing human approval to rotate a database credential.
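The text does not say how a trust score is computed, so the following Python sketch picks one simple option, an exponential moving average of outcomes, purely as an assumption. The `TrustScores` class, the starting value of 0.2, the smoothing factor, and the 0.8 autonomy threshold are all hypothetical. What it does reflect from the description above is that scores are kept per agent and per action type, start low, rise with successful decisions, and fall with rollbacks.

```python
from collections import defaultdict


class TrustScores:
    """Trust per (agent, action type), updated as an exponential moving average."""

    def __init__(self, initial=0.2, alpha=0.1):
        self._alpha = alpha
        self._scores = defaultdict(lambda: initial)  # new agents start low

    def record(self, agent, action_type, succeeded):
        """Move the score toward 1.0 on success and toward 0.0 on a rollback."""
        key = (agent, action_type)
        outcome = 1.0 if succeeded else 0.0
        self._scores[key] += self._alpha * (outcome - self._scores[key])
        return self._scores[key]

    def score(self, agent, action_type):
        return self._scores[(agent, action_type)]

    def is_autonomous(self, agent, action_type, threshold=0.8):
        """Below the threshold, the action needs human approval."""
        return self.score(agent, action_type) >= threshold


trust = TrustScores()
for _ in range(30):
    trust.record("k8s-agent", "restart_pod", succeeded=True)

can_restart = trust.is_autonomous("k8s-agent", "restart_pod")
can_rotate = trust.is_autonomous("k8s-agent", "rotate_db_credential")
```

In this run the agent has earned autonomy for pod restarts but not for credential rotation, because the two action types are scored independently.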

Policy envelopes. For each agent, for each action type, you set a blast-radius ceiling: number of services, number of regions, degree of irreversibility, time-of-day constraints. The ceiling is enforced at execution time, not by the agent's own judgment. An agent cannot talk its way out of its policy envelope, because the envelope is enforced by the platform, not the model.
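Enforcement at execution time can be pictured as a check the executor runs before any action, regardless of what the agent proposed. This is a minimal Python sketch under assumptions: `Envelope`, `PlannedAction`, `EnvelopeViolation`, and `enforce` are hypothetical names, irreversibility is reduced to a boolean, and the time window is assumed not to cross midnight.

```python
from dataclasses import dataclass
from datetime import time


@dataclass(frozen=True)
class Envelope:
    max_services: int
    max_regions: int
    allow_irreversible: bool
    window_start: time   # assumed not to cross midnight
    window_end: time


@dataclass(frozen=True)
class PlannedAction:
    services: frozenset[str]
    regions: frozenset[str]
    irreversible: bool
    at: time


class EnvelopeViolation(Exception):
    """Raised by the executor before anything runs."""


def enforce(envelope, action):
    """Called by the platform's executor, never by the agent itself."""
    if len(action.services) > envelope.max_services:
        raise EnvelopeViolation("too many services")
    if len(action.regions) > envelope.max_regions:
        raise EnvelopeViolation("too many regions")
    if action.irreversible and not envelope.allow_irreversible:
        raise EnvelopeViolation("irreversible actions are not permitted")
    if not (envelope.window_start <= action.at <= envelope.window_end):
        raise EnvelopeViolation("outside the permitted time window")


restart_envelope = Envelope(
    max_services=1, max_regions=1, allow_irreversible=False,
    window_start=time(0, 0), window_end=time(23, 59),
)
plan = PlannedAction(
    services=frozenset({"checkout"}), regions=frozenset({"us-east-1"}),
    irreversible=False, at=time(2, 47),
)
enforce(restart_envelope, plan)  # passes silently; a violation would raise
```

Because the check lives outside the model, the agent's reasoning has no path to widen its own ceiling.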

The Agent Ledger. Every decision (prompt, plan, API calls, outcome) is written to an immutable, replayable ledger. You can audit any agent's behavior for the last 24 hours, 24 days, or 24 months. You can revoke an agent's autonomy retroactively when you detect a bad pattern, and every downstream action that inherited from that decision is flagged for review. This is what makes agents auditable in a way traditional automation is not.
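Flagging "every downstream action that inherited from that decision" is, in effect, a graph traversal over decision lineage. The sketch below assumes each ledger entry records the ids of the decisions it was derived from; that `parents` field, the entry shape, and the `flag_downstream` function are hypothetical, chosen only to illustrate the traversal.

```python
from collections import deque


def flag_downstream(entries, revoked_agent):
    """Return ids of the revoked agent's decisions plus everything derived from them.

    `entries` maps decision id -> {"agent": str, "parents": [decision ids]}.
    """
    # Invert the parent links so we can walk from a decision to its children.
    children = {}
    for decision_id, entry in entries.items():
        for parent in entry["parents"]:
            children.setdefault(parent, []).append(decision_id)

    # Breadth-first walk starting from every decision the revoked agent made.
    queue = deque(d for d, e in entries.items() if e["agent"] == revoked_agent)
    flagged = set(queue)
    while queue:
        current = queue.popleft()
        for child in children.get(current, []):
            if child not in flagged:
                flagged.add(child)
                queue.append(child)
    return flagged


history = {
    "d1": {"agent": "k8s-agent", "parents": []},
    "d2": {"agent": "db-agent", "parents": ["d1"]},
    "d3": {"agent": "cdn-agent", "parents": ["d2"]},
    "d4": {"agent": "db-agent", "parents": []},
}
to_review = flag_downstream(history, "k8s-agent")
```

Here `d2` and `d3` are flagged even though other agents made them, because they descend from a decision by the revoked agent, while the unrelated `d4` is left alone.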

Taken together, these three mechanisms let an agentic platform absorb a wrong agent decision without losing production. That is the bar. If a platform cannot demonstrate each of these, the honest description is "agent-themed" rather than agentic.

Getting started: 8 questions to ask any Agentic SRE vendor

An evaluation framework, not a sales pitch. These questions let you tell agent-native platforms from AIOps-with-a-rebrand in under an hour.

- How many agents do you ship, and what are their specializations? "One smart AI" is not an answer. Ask for the list.

- What trust-scoring model do the agents use? If every agent shares a global score, the specialization is cosmetic.

- What is the audit format, and how long is it retained? If you cannot replay a decision from 90 days ago, you do not have an audit trail.

- Which clouds and OSes are first-class? "Supports AWS, GCP, Azure, Linux, and Windows" should mean a uniform intent layer, not five separate integrations.

- How is an agent's autonomy revoked when it is wrong? Atomically, across all in-flight actions? Or only prospectively? The answer determines your blast radius in the worst case.

- What is the policy graph model? If you cannot author policy as code, you cannot version it, review it, or roll it back.

- Is the platform agent-native or AI bolted onto an AIOps stack? The tell is the data model: agent-native platforms treat agents as first-class objects with identity, state, and lineage. AIOps retrofits treat them as features on top of alerts.

- What metric should we track to know this is working? Accept "auto-resolution rate + engineer-hours returned." Reject "anomalies detected."

A platform that answers all eight concretely is worth a pilot. A platform that needs to "circle back on the details" is almost certainly not agent-native yet.
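The two metrics named in the last question are straightforward to compute from incident records during a pilot. The Python sketch below is illustrative only: the `resolved_by` field, the `pilot_metrics` function, and the 45-minute manual-handling estimate are assumptions, and the per-incident estimate should be replaced with your team's own measured figure.

```python
def pilot_metrics(incidents, minutes_per_manual_incident=45):
    """Compute auto-resolution rate and an estimate of engineer-hours returned.

    `incidents` is a list of dicts with a "resolved_by" key ("agent" or "human").
    The minutes-per-incident figure is a placeholder, not a benchmark.
    """
    auto_resolved = [i for i in incidents if i["resolved_by"] == "agent"]
    rate = len(auto_resolved) / len(incidents) if incidents else 0.0
    hours_returned = len(auto_resolved) * minutes_per_manual_incident / 60
    return {"auto_resolution_rate": rate, "engineer_hours_returned": hours_returned}


week = [
    {"id": "INC-1", "resolved_by": "agent"},
    {"id": "INC-2", "resolved_by": "agent"},
    {"id": "INC-3", "resolved_by": "human"},
    {"id": "INC-4", "resolved_by": "agent"},
]
metrics = pilot_metrics(week)
```

With three of four incidents closed by agents, the rate is 0.75 and the estimate is 2.25 engineer-hours returned at 45 minutes per incident.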
